Elastic Container Service (ECS): My default choice for containers on AWS

Amazon Elastic Container Service is the default AWS service I reach for whenever I need to run containers. Whether it's a batch processing pipeline that fans out across hundreds of Fargate tasks or a FastAPI backend sitting behind an Application Load Balancer, ECS handles the orchestration without the operational complexity of Kubernetes. The control plane is free, the AWS integration is deep, and as of early 2026, the deployment capabilities rival anything in the container ecosystem.

I recently presented on ECS and decided to collect what I have learned from building real projects in one place. This blog post is the companion to that presentation - a deep dive into what ECS offers, how I use it, and how you can start building with it today.

Why Containers?

Before we talk about ECS specifically, let's talk about why containers matter. Four core principles make containers compelling:

- Consistency - The same image runs identically on your laptop, in CI, and in production. No more "works on my machine."

- Isolation - Each container gets its own filesystem, networking, and process space. Multiple services on the same host without conflicts.

- Efficiency - Containers share the host OS kernel. Startup in seconds, not minutes. Far less overhead than virtual machines.

- Portability - A Docker image runs on ECS, EKS, Lambda, or your own servers. Your business logic stays runtime-agnostic.

In my Aurora DSQL Kabob Store project, I made this a deliberate design decision - keeping business logic runtime-agnostic so the same FastAPI application could deploy on Fargate, EC2, EKS, or Lambda with minimal adapter code.

What is Amazon ECS?

ECS is a fully managed container orchestration service. You define what to run and how, and ECS handles placement, scaling, availability, and integration with the rest of AWS.

Four things make ECS stand out:

- No control plane cost - Unlike EKS (~$75/month per cluster), the ECS orchestration layer is completely free. You only pay for the compute your containers use.

- Deep AWS integration - IAM roles per task, CloudWatch Container Insights, native ALB target groups, Secrets Manager injection, and tight integration with every major AWS service.

- Flexible compute - Choose between Fargate (serverless), EC2 (self-managed), or the new Managed Instances (AWS-managed EC2).

- Deployment sophistication - Rolling updates, native blue/green, canary, and linear deployments all built in.

Core Concepts

Five building blocks make up ECS:

Cluster - A logical grouping of tasks and services. Think of it as your namespace. A cluster can span Fargate, EC2, and Managed Instances simultaneously.

Task Definition - The blueprint. A JSON document that specifies container images, CPU, memory, networking mode, volumes, IAM roles, and logging configuration. Versioned - each registration creates a new revision (e.g., my-app:3).

Task - A running instance of a task definition. One or more containers working together. On Fargate, each task gets its own elastic network interface and private IP.

Service - Maintains a desired count of tasks. Handles replacement of failed tasks, load balancer registration, auto scaling, and deployments.

Container Instance - An EC2 instance running the ECS agent, registered to a cluster. Only relevant if you're using the EC2 launch type.

Compute Options

ECS gives you four ways to provide compute for your containers. Choosing the right one depends on your workload characteristics, cost sensitivity, and operational preferences.

Fargate (Serverless) - The Default Choice

Fargate is what I recommend for most workloads. With it, there are no EC2 instances to manage. You specify CPU and memory at the task level, and AWS handles everything underneath.

| CPU (vCPU) | Memory Options | Notes |
|---|---|---|
| 0.25 | 512 MiB, 1-2 GB | Linux only |
| 0.5 | 1-4 GB | Linux only |
| 1 | 2-8 GB | Linux and Windows |
| 2 | 4-16 GB | Linux and Windows |
| 4 | 8-30 GB | Linux and Windows |
| 8 | 16-60 GB | Linux only |
| 16 | 32-120 GB | Linux only |

Fargate supports both x86_64 and ARM64 (Graviton) architectures. Graviton gives you roughly 20% better price-performance for most workloads. Pricing is per-second based on vCPU and memory consumed.

Fargate Spot offers up to 70% savings for fault-tolerant workloads. When AWS reclaims capacity, tasks receive a SIGTERM with a 2-minute warning. I use this for batch processing jobs where interruption just means retrying one file.
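A minimal Python sketch of how a batch worker might react to that SIGTERM, so that an interruption costs at most the file in flight. The function and variable names are hypothetical and the per-file work is passed in as a callback; this is an illustration, not code from my Rust worker.

```python
import signal
import threading

# Set when ECS asks the task to stop (for example, on a Fargate Spot reclaim).
shutdown_requested = threading.Event()


def handle_sigterm(signum, frame):
    """Record the stop request; the work loop checks it between files."""
    shutdown_requested.set()


# Must be registered from the main thread of the process.
signal.signal(signal.SIGTERM, handle_sigterm)


def process_files(files, process_one):
    """Process files one at a time, stopping cleanly once SIGTERM has arrived.

    Returns (done, remaining) so unprocessed files can be retried elsewhere.
    """
    done = []
    for index, name in enumerate(files):
        if shutdown_requested.is_set():
            return done, list(files[index:])
        process_one(name)
        done.append(name)
    return done, []
```

ECS follows the SIGTERM with a SIGKILL once the container's stopTimeout expires, so the check between files needs to happen well inside that window.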

EC2 Launch Type

The EC2 launch type offers full control over the underlying instances. You choose the AMI and instance type, and you manage patching and scaling yourself. Choose EC2 when you need GPUs, custom AMIs, specific instance families, or when sustained high utilization makes reserved instances cheaper than Fargate.

The trade-off is clear: more control, more operational burden.

ECS Managed Instances

Launched in September 2025, Managed Instances bridge the gap between Fargate simplicity and EC2 flexibility. AWS handles provisioning, auto-scaling, Bottlerocket OS patching (14-day cycles), and host replacement. You control instance type selection via attribute-based selection - say "I need 4 GPUs" and ECS picks the right instance.

The "start before stop" principle for host replacement is particularly nice - new capacity comes up before old goes down, maintaining availability throughout.

This is the answer for GPU workloads and ML inference where Fargate isn't an option but you don't want to manage EC2 fleets.

Capacity Providers

Capacity providers are the recommended way to configure compute. The strategy uses two parameters:

- Base - Minimum tasks guaranteed on a specific provider (only one provider can have a base)

- Weight - Relative proportion of tasks after the base is filled

Example: base 2 on FARGATE, weight 4 on FARGATE_SPOT, weight 1 on FARGATE. Your first 2 tasks are guaranteed to use on-demand Fargate. After that, 4 out of every 5 new tasks go to Spot. Cost optimization with a reliability floor.
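To make the base/weight arithmetic concrete, here is a rough Python sketch that estimates the split for a given desired count. The function name is hypothetical, and the way leftover tasks are rounded (largest fractional share first) is my assumption, not documented ECS behavior.

```python
def split_tasks(desired, strategy):
    """Estimate how many tasks land on each capacity provider.

    strategy: list of (provider, base, weight) tuples.
    Bases are filled first; the remainder is split by weight. Leftover tasks
    from rounding go to the largest fractional shares (an assumption).
    """
    counts = {}
    remaining = desired
    for provider, base, _weight in strategy:
        take = min(base, remaining)
        counts[provider] = take
        remaining -= take

    total_weight = sum(weight for _p, _b, weight in strategy)
    if remaining == 0 or total_weight == 0:
        return counts

    shares = [(provider, remaining * weight / total_weight)
              for provider, _b, weight in strategy]
    for provider, share in shares:
        counts[provider] += int(share)
    leftover = remaining - sum(int(share) for _p, share in shares)
    by_fraction = sorted(shares, key=lambda item: item[1] - int(item[1]), reverse=True)
    for provider, _share in by_fraction[:leftover]:
        counts[provider] += 1
    return counts


# The example above: base 2 and weight 1 on FARGATE, weight 4 on FARGATE_SPOT.
strategy = [("FARGATE", 2, 1), ("FARGATE_SPOT", 0, 4)]
print(split_tasks(12, strategy))  # {'FARGATE': 4, 'FARGATE_SPOT': 8}
```

With 12 tasks, 2 go to the on-demand base and the other 10 split 2:8, which matches the "4 out of every 5" rule.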

Task Definitions - The Blueprint

The task definition is where you define everything about your containers. Here are the critical parameters:

Container Definitions

Each task definition contains one or more container definitions. Key parameters include:

- image - Docker image from ECR (Elastic Container Registry), Docker Hub, or any private registry

- essential - If an essential container stops, the entire task stops. Your main app is essential; your log router sidecar might not be

- portMappings - Container ports, with named ports for Service Connect

- healthCheck - CMD-SHELL command with configurable interval, timeout, retries, and start period

- dependsOn - Container startup ordering with conditions: START, COMPLETE, SUCCESS, HEALTHY

- restartPolicy - Container-level restarts without killing the entire task. Configurable attempt period (60-1800 seconds) and ignored exit codes
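The parameters above fit together roughly as in this Python sketch, which builds two container definitions as plain dictionaries and checks that the dependsOn references are consistent before printing the JSON. The container names, images, port, and health endpoint are all hypothetical, and the check function is only an illustration, not part of any AWS tooling.

```python
import json

VALID_CONDITIONS = {"START", "COMPLETE", "SUCCESS", "HEALTHY"}

# Hypothetical container names and images, for illustration only.
containers = [
    {
        "name": "log-router",
        "image": "example/log-router:1.0",
        "essential": False,  # the task keeps running if this sidecar stops
    },
    {
        "name": "api",
        "image": "example/api:1.0",
        "essential": True,  # if this container stops, the whole task stops
        "portMappings": [{"name": "http", "containerPort": 8000}],
        "healthCheck": {
            # Assumes curl exists in the image and the app serves /health.
            "command": ["CMD-SHELL", "curl -f http://localhost:8000/health || exit 1"],
            "interval": 30,
            "timeout": 5,
            "retries": 3,
            "startPeriod": 15,
        },
        "dependsOn": [{"containerName": "log-router", "condition": "START"}],
        "restartPolicy": {
            "enabled": True,
            "restartAttemptPeriod": 60,
            "ignoredExitCodes": [0],
        },
    },
]


def check_depends_on(definitions):
    """Return a list of problems with dependsOn references (empty if none)."""
    names = {c["name"] for c in definitions}
    problems = []
    for c in definitions:
        for dep in c.get("dependsOn", []):
            if dep["containerName"] not in names:
                problems.append(f'{c["name"]}: unknown container {dep["containerName"]}')
            if dep["condition"] not in VALID_CONDITIONS:
                problems.append(f'{c["name"]}: invalid condition {dep["condition"]}')
    return problems


print(check_depends_on(containers))
print(json.dumps(containers, indent=2))
```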

Task Role vs Execution Role

This distinction trips people up:

| | Task Role | Execution Role |
|---|---|---|
| Purpose | Permissions for your application code | Permissions for the ECS agent |
| Used by | Your containers calling AWS APIs | ECS pulling images, pushing logs, fetching secrets |
| Example | S3 read/write, DynamoDB access | ecr:GetAuthorizationToken, logs:CreateLogStream, secretsmanager:GetSecretValue |

Two different roles, two different purposes. The task role follows least privilege for your application. The execution role is about infrastructure plumbing.

Secrets Injection

ECS natively injects secrets as environment variables from Secrets Manager or SSM Parameter Store:

    {
      "secrets": [
        {
          "name": "DB_PASSWORD",
          "valueFrom": "arn:aws:secretsmanager:us-east-1:123456789012:secret:my-secret"
        },
        {
          "name": "API_KEY",
          "valueFrom": "arn:aws:ssm:us-east-1:123456789012:parameter/my-param"
        }
      ]
    }

Secrets Manager supports specific JSON keys (arn:...secret:my-secret:username::) and version staging. Never bake secrets into container images or task definitions.
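As a small illustration of the valueFrom format and of how the application sees the result, here is a hedged Python sketch. Both helper names are hypothetical; the ARN is a placeholder with a made-up account ID.

```python
import os


def secret_value_from(secret_arn, json_key="", version_stage="", version_id=""):
    """Build a Secrets Manager valueFrom string.

    Format: <secret-arn>:<json-key>:<version-stage>:<version-id>
    Unused fields are left blank, so a JSON key alone ends with "::".
    """
    if not (json_key or version_stage or version_id):
        return secret_arn
    return f"{secret_arn}:{json_key}:{version_stage}:{version_id}"


def read_secret_env(name, environ=os.environ):
    """Read an injected secret at startup, failing fast if it is missing."""
    value = environ.get(name)
    if not value:
        raise RuntimeError(f"missing required secret environment variable: {name}")
    return value


arn = "arn:aws:secretsmanager:us-east-1:123456789012:secret:my-secret"
print(secret_value_from(arn, json_key="username"))
```

Inside the container the application only ever sees an ordinary environment variable, so `read_secret_env("DB_PASSWORD")` works the same locally and on ECS.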

Networking

Networking Modes

| Mode | Description | Use With |
|---|---|---|
| awsvpc | Each task gets its own ENI and private IP. Per-task security groups. Required for Fargate. | Fargate, EC2, Managed Instances |
| bridge | Docker's virtual network. Dynamic port mapping with ALB. | EC2 only |
| host | Containers use host's network directly. No port isolation. | EC2 only |
| none | No external networking. | EC2 only |

Recommendation: Use awsvpc unless you have a specific reason not to. It's the only mode that works everywhere and gives you per-task security groups.

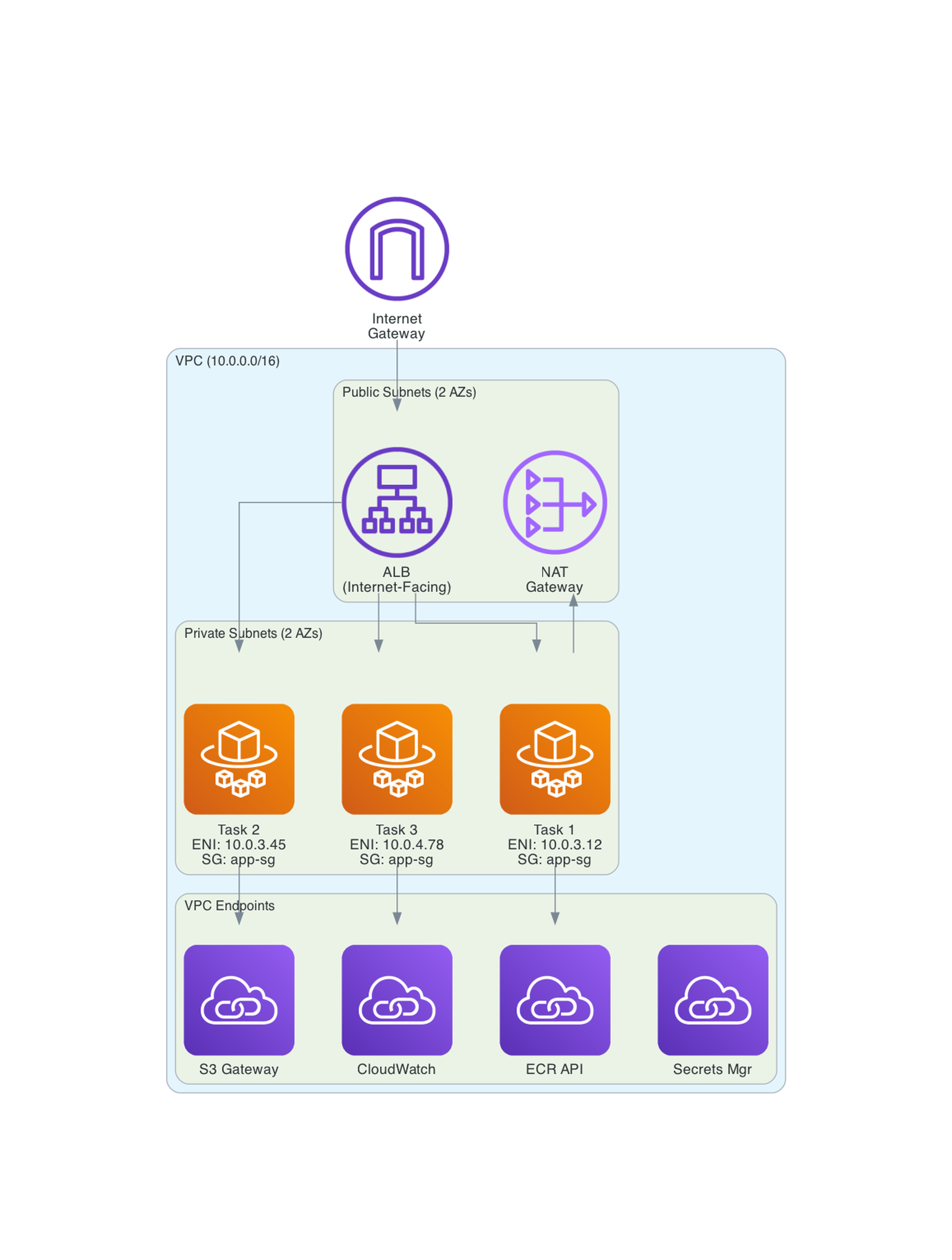

VPC Architecture

For production workloads, run ECS tasks in private subnets. Use VPC endpoints for ECR, S3, and CloudWatch to avoid NAT gateway data transfer costs. This is the biggest hidden cost in ECS architectures - NAT gateways charging for every image pull and log push.

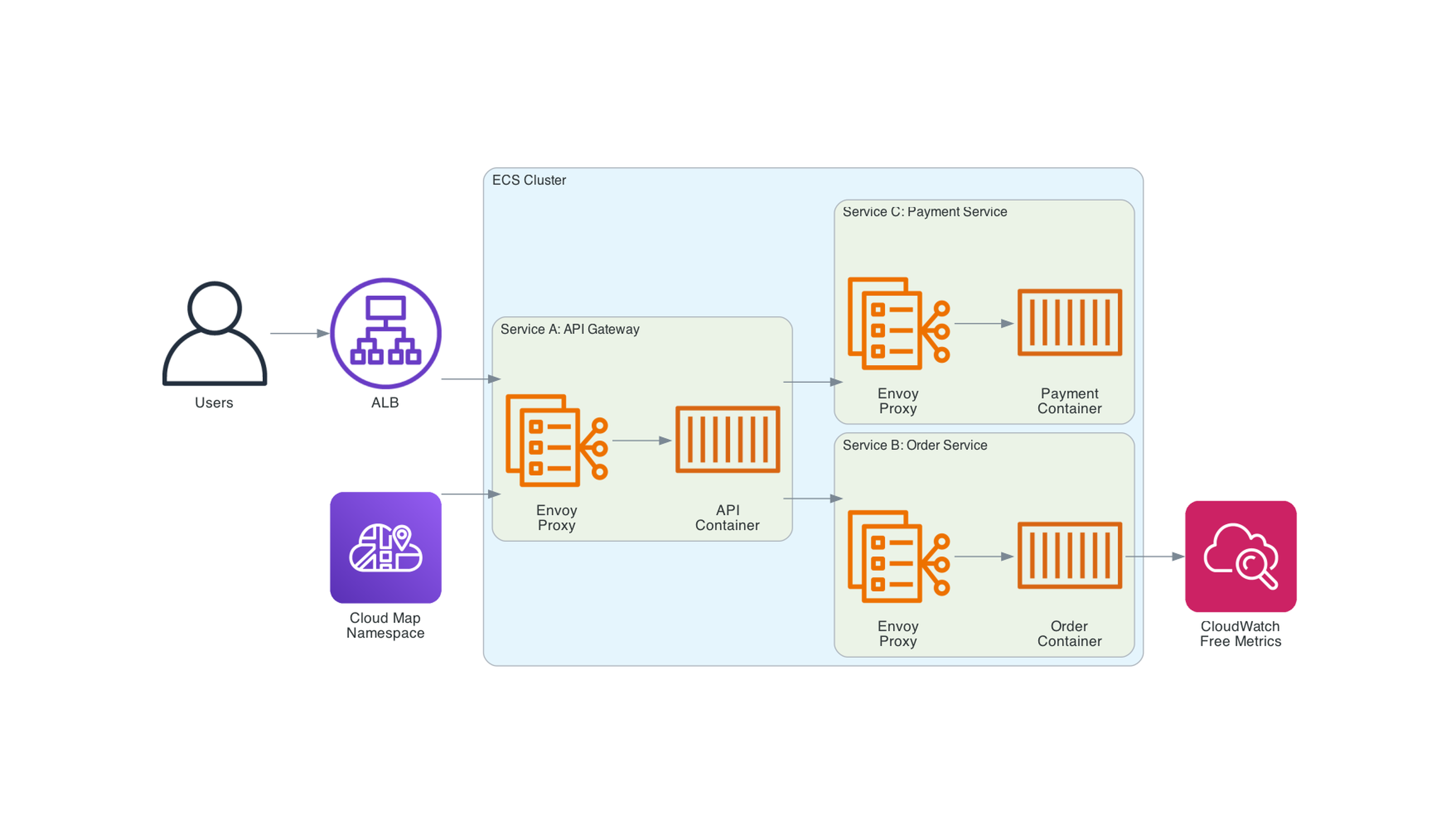
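The VPC endpoints mentioned above can be listed with a small helper like this Python sketch. The function name and the optional Secrets Manager flag are hypothetical; the service names follow the standard `com.amazonaws.<region>.<service>` pattern. ECR needs both of its interface endpoints, plus the S3 gateway endpoint because image layers are served from S3.

```python
def ecs_private_endpoints(region, use_secrets_manager=False):
    """Return (service_name, endpoint_type) pairs for tasks in private subnets."""
    endpoints = [
        (f"com.amazonaws.{region}.ecr.api", "Interface"),  # ECR API calls
        (f"com.amazonaws.{region}.ecr.dkr", "Interface"),  # image pulls
        (f"com.amazonaws.{region}.s3", "Gateway"),         # image layers live in S3
        (f"com.amazonaws.{region}.logs", "Interface"),     # awslogs driver
    ]
    if use_secrets_manager:
        endpoints.append((f"com.amazonaws.{region}.secretsmanager", "Interface"))
    return endpoints


for name, kind in ecs_private_endpoints("us-east-1", use_secrets_manager=True):
    print(f"{kind:9} {name}")
```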

Service Connect

Service Connect is the recommended way to handle service-to-service communication. It automatically injects an Envoy proxy as a sidecar, providing:

- Service discovery via Cloud Map namespaces

- Client-side load balancing with retries and outlier detection

- Free application-level traffic metrics in CloudWatch (request count, latency, error rates)

- Support for HTTP, HTTP2, gRPC, and TCP

- Per-request Envoy access logs (October 2025)

Service Connect replaces AWS App Mesh, which will be discontinued in September 2026.

Deployment Strategies

ECS has the most sophisticated deployment options of any container orchestrator on AWS. As of March 2026, four strategies are available natively.

Rolling Update (Default)

Gradually replaces old tasks with new ones. Controlled by:

- minimumHealthyPercent (default 100%) - Minimum tasks that must remain running

- maximumPercent (default 200%) - Maximum tasks allowed during deployment

For zero-downtime with a desired count of 4: min 100%, max 200% means ECS starts 4 new tasks, waits for them to be healthy, then stops the 4 old tasks.
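The two percentages translate into task-count bounds as in this short Python sketch. The function name is hypothetical; the rounding follows the ECS documentation as I understand it, with the minimum rounded up and the maximum rounded down.

```python
def rolling_update_bounds(desired_count, minimum_healthy_percent=100, maximum_percent=200):
    """Return (min_running, max_running) task counts during a rolling update."""
    # Integer math avoids floating-point surprises.
    min_running = -(-desired_count * minimum_healthy_percent // 100)  # round up
    max_running = desired_count * maximum_percent // 100              # round down
    return min_running, max_running


print(rolling_update_bounds(4))           # (4, 8)
print(rolling_update_bounds(4, 50, 100))  # (2, 4)
```

The second call shows the trade-off when you cannot afford extra capacity: with min 50% and max 100%, ECS has to stop 2 old tasks before it can start 2 new ones.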

Blue/Green

Built into ECS without CodeDeploy dependency. Provisions 100% new capacity ("green"), validates, then shifts all production traffic at once. Key features:

- Six Lambda lifecycle hooks: pre-scale-up, post-scale-up, test traffic shift, production traffic shift, post-test, post-production

- Configurable bake time for instant rollback window

- Works with ALB, NLB, and Service Connect

Canary (October 2025)

Two-stage deployment: shift a small percentage of traffic first (configurable from 0.1% to 99.9%), validate with real production traffic, then shift the rest. Ideal for critical user-facing services where you want to limit blast radius.

Linear (October 2025)

Gradual traffic shift in equal increments as small as 3%, with configurable bake time between each step. The most conservative approach - allows monitoring at each increment.
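To compare the two traffic-shifting strategies, this Python sketch lists the cumulative traffic percentage after each shift. The function names are hypothetical, the percentage limits are the ones quoted in this post, and capping the final linear step at 100% is my assumption about how an uneven last increment is handled.

```python
import math


def canary_steps(canary_percent):
    """Two-stage shift: a small canary slice, then everything."""
    if not 0.1 <= canary_percent <= 99.9:
        raise ValueError("canary percent must be between 0.1 and 99.9")
    return [float(canary_percent), 100.0]


def linear_steps(step_percent):
    """Equal increments up to 100%, with the final step capped at 100."""
    if not 3 <= step_percent <= 100:
        raise ValueError("step percent must be between 3 and 100")
    step = float(step_percent)
    count = math.ceil(100 / step)
    return [min(100.0, step * i) for i in range(1, count + 1)]


print(canary_steps(5))   # [5.0, 100.0]
print(linear_steps(25))  # [25.0, 50.0, 75.0, 100.0]
```

A 3% linear step means 34 shifts in total, each followed by its bake time, which is why linear is the most conservative option.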

Deployment Circuit Breaker

The safety net across all strategies. If tasks keep failing to start or pass health checks, ECS automatically stops the deployment and optionally rolls back to the last successful version. You can also attach CloudWatch alarms to the deployment configuration, alongside the circuit breaker, to detect application-level failures, not just infrastructure failures.

Service Auto Scaling

ECS uses Application Auto Scaling with four policy types:

- Target Tracking - Set a target metric value (e.g., CPU at 50%). Simplest to configure - works like a thermostat.

- Step Scaling - Define explicit threshold/action pairs. React differently at different severity levels.

- Scheduled Scaling - Time-based. Scale up for business hours, down at night. Supports scaling to zero (set minimum capacity to 0).

- Predictive Scaling - ML-based. Analyzes historical patterns and proactively scales before demand hits. Doesn't trigger scale-ins on its own - pair with target tracking.

Important behavior: scale-in is automatically paused during deployments to protect availability.
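The thermostat analogy for target tracking can be illustrated with a simple proportional model in Python. This is a deliberately simplified approximation for intuition only: the real Application Auto Scaling behavior also involves alarms, cooldowns, and more conservative scale-in, and the function name is hypothetical.

```python
import math


def target_tracking_estimate(current_tasks, metric_value, target_value, min_tasks, max_tasks):
    """Rough estimate of the task count that brings the metric back to target."""
    raw = math.ceil(current_tasks * metric_value / target_value)
    # Never go outside the configured minimum and maximum capacity.
    return max(min_tasks, min(max_tasks, raw))


# 4 tasks at 75% CPU with a 50% target suggests about 6 tasks.
print(target_tracking_estimate(4, 75, 50, min_tasks=1, max_tasks=10))
```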

Storage Options

| Storage | Persistence | Shared | Use Case |
|---|---|---|---|
| Ephemeral | Task lifetime | Within task | Temp files, caches. Default 20 GiB, up to 200 GiB on Fargate |
| EFS | Persistent | Across tasks | Shared config, models, content. Multi-AZ, IAM auth |
| EBS | Configurable | Single task | High-IOPS data processing. One volume per task |
| Bind Mounts | Task lifetime | Within task | Container-to-container data sharing |

EFS is the most versatile - persistent, shared across tasks, supports IAM authorization and transit encryption. EBS is for high-performance block storage when EFS throughput is insufficient. One gotcha with EFS: creating a new file system can take minutes, but this is typically a one-time step for a given application.

Security

Security in ECS follows the principle of least privilege at the task level:

- Task roles - Each task definition gets its own IAM role. Your batch processor gets S3 and SQS access. Your API gets DynamoDB access. Not a shared instance profile.

- Secrets injection - Secrets Manager and SSM Parameter Store values injected as environment variables at startup.

- Network isolation - awsvpc mode gives each task its own security group. Run tasks in private subnets.

- Read-only root filesystem - Run containers with readonlyRootFilesystem: true for hardening.

- Image scanning - ECR enhanced scanning with Amazon Inspector continuously scans for OS and language package vulnerabilities. As of 2026, it supports minimal base images like scratch and distroless, and shows which images are running in your clusters.

Observability

CloudWatch Container Insights (Enhanced)

Container Insights provides granular metrics at the cluster, service, task, and container level. The honeycomb visualization gives you cluster health at a glance - alarm state and utilization side by side. It also shows deployment tracking alongside infrastructure anomalies and supports cross-account monitoring for unified views. It can be enabled per-cluster or account-wide.

Logging

The awslogs driver sends container logs directly to CloudWatch Logs. As of June 2025, the default mode switched from blocking to non-blocking - if the log buffer fills up, excess logs are dropped rather than blocking your application. This prioritizes availability over logging completeness.

For advanced log routing, FireLens with Fluent Bit as a sidecar routes logs to any destination - CloudWatch, S3, Elasticsearch, Datadog, Splunk. Different containers can route to different destinations.

Tracing

Deploy the AWS Distro for OpenTelemetry (ADOT) collector as a sidecar. It receives OTLP traces on port 4317 (gRPC) or 4318 (HTTP) and exports to X-Ray automatically. This replaces the legacy X-Ray daemon approach.

ECS Exec

ECS Exec lets you shell into a running container directly - the equivalent of docker exec but for tasks running on Fargate or EC2. It uses AWS Systems Manager (SSM) under the hood, so there's no need to open inbound ports or SSH. I use this all the time - it's one of the most useful ECS features IMO.

To enable it, set enableExecuteCommand: true on your service or run task call. Then:

    aws ecs execute-command \
      --cluster my-cluster \
      --task abc123 \
      --container my-app \
      --interactive \
      --command "/bin/sh"

This is invaluable for debugging - inspecting environment variables, checking network connectivity, verifying mounted volumes, or tailing logs inside the container. A few things to keep in mind:

- It must be enabled before the task launches - you can't retroactively enable it on already-running tasks. For services, enabling it requires a new deployment

- The task role needs SSM permissions (ssmmessages:CreateControlChannel, ssmmessages:CreateDataChannel, ssmmessages:OpenControlChannel, ssmmessages:OpenDataChannel)

- The container image needs a shell (/bin/sh or /bin/bash) - scratch and distroless images won't work

- All sessions are logged to CloudWatch or S3 for audit

- Works with both Fargate and EC2 launch types

For quick diagnostics, the amazon-ecs-exec-checker script validates that your task, role, and agent are configured correctly.
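The four SSM permissions listed above can be expressed as an IAM policy document for the task role. This Python sketch just builds and prints the JSON; the function name is hypothetical, and you would attach the result to your own task role through whatever tooling you use.

```python
import json

ECS_EXEC_ACTIONS = [
    "ssmmessages:CreateControlChannel",
    "ssmmessages:CreateDataChannel",
    "ssmmessages:OpenControlChannel",
    "ssmmessages:OpenDataChannel",
]


def ecs_exec_policy():
    """Build the task-role policy statement that ECS Exec relies on."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ECS_EXEC_ACTIONS,
                "Resource": "*",
            }
        ],
    }


print(json.dumps(ecs_exec_policy(), indent=2))
```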

Real-World Architecture: Batch Processing with Fargate

My Serverless Data Processor project demonstrates the batch processing pattern.

The architecture: S3 upload triggers a Lambda that extracts files. Step Functions distributed map fans out processing across Fargate tasks - each file gets its own container. The containers use the waitForTaskToken pattern - Step Functions passes a callback token as an environment variable, the Rust container processes the data, then calls send_task_success to signal completion.

Key details:

- Fargate at minimum specs: 0.25 vCPU, 512 MB RAM

- OpenTelemetry sidecar for CloudWatch metrics

- Container images in ECR with multi-stage Docker builds

- Written in Rust for the worker containers

- Infrastructure managed with Terraform

Here is the Step Functions integration that launches Fargate tasks:

    {
      "Type": "Task",
      "Resource": "arn:aws:states:::ecs:runTask.waitForTaskToken",
      "Parameters": {
        "LaunchType": "FARGATE",
        "Cluster": "${ecs_cluster}",
        "TaskDefinition": "${task_def_name}",
        "NetworkConfiguration": {
          "AwsvpcConfiguration": {
            "Subnets": ["${fargate_subnet}"],
            "SecurityGroups": ["${vpc_default_sg}"]
          }
        },
        "Overrides": {
          "ContainerOverrides": [{
            "Name": "store_data_processor_daily",
            "Environment": [
              {"Name": "TASK_TOKEN", "Value.$": "$$.Task.Token"},
              {"Name": "S3_BUCKET", "Value.$": "$.BatchInput.source_bucket_name"},
              {"Name": "S3_KEY", "Value.$": "$.Items[0].Key"}
            ]
          }]
        }
      }
    }

This pattern works for any fan-out workload - ETL, media processing, report generation, ML batch inference. Each task is independent, starts in seconds, processes its data, and terminates. You pay only for the compute time used.
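On the container side, the callback half of the waitForTaskToken pattern looks roughly like the Python sketch below. My actual worker is written in Rust, so treat this as an illustration only: `run_worker` and `process` are hypothetical names, and the Step Functions client is passed in so the logic can be exercised without AWS. The `send_task_success` and `send_task_failure` calls use the boto3 Step Functions client parameter names.

```python
import json
import os


def run_worker(sfn_client, process, environ=os.environ):
    """Process one file, then report the outcome back to Step Functions.

    sfn_client: a Step Functions client, e.g. boto3.client("stepfunctions").
    process:    callable taking (bucket, key) and returning a JSON-serializable result.
    Returns True if success was reported, False if failure was reported.
    """
    # These variables are set by the ContainerOverrides in the state definition.
    token = environ["TASK_TOKEN"]
    bucket = environ["S3_BUCKET"]
    key = environ["S3_KEY"]

    try:
        result = process(bucket, key)
    except Exception as exc:
        # Tell the state machine right away instead of letting it wait for a timeout.
        sfn_client.send_task_failure(
            taskToken=token,
            error=type(exc).__name__,
            cause=str(exc)[:256],
        )
        return False

    sfn_client.send_task_success(taskToken=token, output=json.dumps(result))
    return True
```

In a real container the entry point would call something like `run_worker(boto3.client("stepfunctions"), process)`, and the task role would need permission for `states:SendTaskSuccess` and `states:SendTaskFailure`.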

I also used this same Fargate + callback token pattern in my Serverless Pizza Ordering project, where the Fargate container simulated pizza preparation and delivery - chosen over Lambda because the "AI" insisted some pizzas could take more than 15 minutes.

Real-World Architecture: API Backend on Fargate

My Aurora DSQL Kabob Store project uses ECS Fargate as an always-on API backend.

The architecture: a React frontend behind CloudFront, with an Application Load Balancer routing to FastAPI containers on Fargate, which connect to Aurora DSQL for multi-region active-active writes.

The key design decision: keep business logic runtime-agnostic. The same FastAPI application uses direct psycopg2 queries (not ORM) so it can deploy across Fargate, ECS on EC2, Lambda, or EKS with minimal adapter code.

Fargate costs about 20-30% more than equivalent EC2 on-demand for sustained workloads, but the operational simplicity during development is worth it. In practice, real migrations from Fargate to EC2 often yield smaller savings than expected - Tines reported only ~5% compute cost savings after migrating, though they saw 30% faster job processing and 10% lower P95 latency from having dedicated hardware. The biggest cost was actually the VPC infrastructure - NAT gateways at ~$2-3/day - not ECS itself. I terraform destroy when not actively developing.

Terraform Examples

Here is the core Terraform for an ECS Fargate setup, taken from my Serverless Data Processor project:

Cluster

    resource "aws_ecs_cluster" "ecs_cluster" {
      name = "${var.project_name}-cluster"

      setting {
        name  = "containerInsights"
        value = "enabled"
      }
    }

Task Definition

    resource "aws_ecs_task_definition" "fargate_processor_task" {
      family                   = var.task_definition_name
      execution_role_arn       = aws_iam_role.ecs_task_execution_role.arn
      task_role_arn            = aws_iam_role.ecs_task_role.arn
      network_mode             = "awsvpc"
      requires_compatibilities = ["FARGATE"]
      cpu                      = var.fargate_cpu
      memory                   = var.fargate_memory

      container_definitions = templatefile(
        "${path.module}/container-definitions.json.tpl",
        {
          app_image           = var.app_image
          fargate_cpu         = var.fargate_cpu
          fargate_memory      = var.fargate_memory
          aws_region          = var.aws_region
          project_name        = var.project_name
          task_container_name = var.task_container_name
        }
      )
    }

Container Definition Template

    [
      {
        "cpu": ${fargate_cpu},
        "essential": true,
        "image": "${app_image}",
        "memory": ${fargate_memory},
        "name": "${task_container_name}",
        "networkMode": "awsvpc",
        "environment": [
          {"name": "S3_BUCKET", "value": "my-bucket"},
          {"name": "S3_KEY", "value": "data/input.json"}
        ],
        "logConfiguration": {
          "logDriver": "awslogs",
          "options": {
            "awslogs-group": "/ecs/${project_name}",
            "awslogs-region": "${aws_region}",
            "awslogs-stream-prefix": "${project_name}-log-stream"
          }
        }
      },
      {
        "image": "public.ecr.aws/aws-observability/aws-otel-collector:latest",
        "name": "aws-otel-collector",
        "essential": false,
        "command": ["--config=/etc/ecs/ecs-cloudwatch.yaml"],
        "logConfiguration": {
          "logDriver": "awslogs",
          "options": {
            "awslogs-group": "/ecs/${project_name}-otel",
            "awslogs-region": "${aws_region}",
            "awslogs-stream-prefix": "otel"
          }
        }
      }
    ]

ECS Service with ALB (for always-on workloads)

    resource "aws_ecs_service" "api_service" {
      name            = "${var.project_name}-service"
      cluster         = aws_ecs_cluster.ecs_cluster.id
      task_definition = aws_ecs_task_definition.api_task.arn
      desired_count   = var.desired_count
      launch_type     = "FARGATE"

      network_configuration {
        subnets          = var.private_subnet_ids
        security_groups  = [aws_security_group.ecs_tasks.id]
        assign_public_ip = false
      }

      load_balancer {
        target_group_arn = aws_lb_target_group.api.arn
        container_name   = var.container_name
        container_port   = var.container_port
      }

      deployment_circuit_breaker {
        enable   = true
        rollback = true
      }

      depends_on = [aws_lb_listener.api]
    }

Three resources for the core ECS setup: cluster, task definition, and service. The container definition template handles the application specifics. The full Terraform for both projects is in the GitHub repos linked at the end.

Recent Features (2025-2026)

ECS has had a remarkable year of feature launches:

- AI Developer Tools (December 2025) - ECS MCP Server for AI-assisted development and operations. Natural language commands for cluster management.

- ECS Express Mode (November 2025) - Deploy a production-ready containerized web app with just three inputs: a container image, a task execution role, and an infrastructure role. Provisions Fargate, ALB with SSL, auto scaling, monitoring, and a unique URL. Up to 25 services can share one ALB. No additional charge beyond the underlying resources.

- Canary and Linear Deployments (October 2025) - Fine-grained traffic shifting. Canary from 0.1% to 99.9%, linear in increments as small as 3%.

- Service Connect Envoy Access Logs (October 2025) - Per-request telemetry for HTTP, HTTP2, gRPC, and TCP.

- ECS Managed Instances (September 2025) - AWS-managed EC2 with Bottlerocket OS. Attribute-based instance selection for GPUs and specialized hardware.

- Native Blue/Green Deployments (July 2025) - Built into ECS without CodeDeploy. Six Lambda lifecycle hooks for testing and approval at each phase. Configurable bake time for instant rollback.

- Non-Blocking Log Driver Default (June 2025) - Prioritizes task availability over logging completeness.

ECS vs EKS - When to Use What

This is the most common question I get. Both solve the same fundamental problem - running containers reliably at scale.

| Criteria | ECS | EKS |
|---|---|---|
| Control plane cost | Free | ~$75/month per cluster |
| Learning curve | AWS-native concepts | Kubernetes concepts |
| AWS integration | Deep, native | Good, via add-ons |
| Multi-cloud | AWS only | Portable K8s manifests |
| Ecosystem | AWS tooling | Helm, ArgoCD, Istio, operators |
| Managed compute | Fargate, Managed Instances | Fargate, managed node groups |

Choose ECS when your team is AWS-focused, you want operational simplicity, you value the free control plane, and your workloads are straightforward services and batch jobs.

Choose EKS when your team knows Kubernetes, you need multi-cloud portability, you want the Kubernetes ecosystem (Helm, ArgoCD, custom operators), or you're running complex stateful workloads.

Most organizations pick based on team expertise and existing tooling, not technical limitations.

ECS vs Lambda - Containers vs Functions

Another comparison that comes up frequently:

| Criteria | ECS/Fargate | Lambda |
|---|---|---|
| Max duration | Unlimited | 15 minutes |
| Max memory | 120 GB | 10 GB |
| Startup | Seconds (image pull) | Milliseconds (warm) to seconds (cold) |
| Pricing | Per-second (vCPU + memory) | Per-invocation + duration |
| Scaling | Service auto scaling | Automatic per-request |
| Best for | Long-running, resource-heavy, always-on | Event-driven, short-lived, bursty |

In my projects I use both. Lambda for event handling - S3 triggers, API endpoints, file extraction. Fargate for heavy processing - data transformation, ML inference, container workloads that need full runtime control.

Best Practices

After building several production systems with ECS, here are the practices I've found most valuable:

- Use Fargate unless you need GPUs or specific instance types. The operational simplicity is worth the cost premium for most workloads.

- Use awsvpc networking mode everywhere. It's the only mode that works on all compute types and gives you per-task security groups.

- Enable the deployment circuit breaker with rollback. This catches failed deployments before they impact all traffic.

- Use capacity provider strategies to mix Spot and on-demand. A base of on-demand with weighted Spot gives you cost savings with a reliability floor.

- Inject secrets via Secrets Manager. Never bake them into images or pass them as plain environment variables.

- Enable Container Insights. The per-task metrics and honeycomb visualization are invaluable for debugging.

- Use Service Connect for service-to-service communication. Free traffic metrics and managed Envoy proxies with no code changes.

- Use VPC endpoints for ECR, S3, and CloudWatch. NAT gateway data transfer costs are the biggest hidden expense in ECS architectures.

- Use multi-stage Docker builds. Keep images small. A Rust binary in a scratch image is a few megabytes. For a Python app, use a slim image with only production dependencies.

- Define health checks in the task definition. Don't rely solely on ALB health checks - container-level health checks catch issues faster.

Pricing - You Pay for Compute, Not Orchestration

The most important thing to know: ECS orchestration is free. You only pay for the compute resources your containers consume.

- Fargate - Per-second billing for vCPU ($0.04048/hour) and memory ($0.004445/GB/hour) at us-east-1 Linux/x86 rates. Spot is up to 70% less. Compute Savings Plans can reduce costs by up to 49% (3-year all-upfront) or ~20% (1-year no-upfront).

- EC2 - Standard instance pricing. Use Savings Plans or Reserved Instances for sustained workloads.

- Managed Instances - EC2 instance pricing plus a management fee for automated provisioning, patching, and host replacement.

Hidden costs to watch:

- NAT gateways - $0.045/GB for data processed. Use VPC endpoints.

- ALB - Fixed hourly cost plus per-LCU. Up to 25 ECS Express Mode services can share one ALB.

- ECR storage - $0.10/GB/month. Use lifecycle policies to clean up old images.

- Ephemeral storage - Fargate charges $0.000111/GB/hour above the default 20 GiB.

Cost optimization strategies:

- Right-sizing - The single biggest lever. Reducing from 1 vCPU/2GB to 0.5 vCPU/1GB can yield ~45-50% lower Fargate task cost.

- Scheduled shutdowns - Running dev/staging environments only during business hours (8 hours/day, 5 days/week) can reduce costs by over 75%.

- Savings Plans - Commit to consistent usage for 1-3 years. Even no-upfront 1-year plans save ~20% on Fargate.
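The right-sizing and scheduled-shutdown numbers above are easy to check with a few lines of Python. The rates are the Fargate prices quoted earlier in this section; they vary by region and architecture, so treat the constants as assumptions and substitute your own. The function names are hypothetical.

```python
# Fargate on-demand rates quoted in this post (USD).
VCPU_PER_HOUR = 0.04048
GB_PER_HOUR = 0.004445
HOURS_PER_WEEK = 24 * 7


def task_cost_per_hour(vcpu, memory_gb):
    """Hourly cost of one Fargate task, excluding extra ephemeral storage."""
    return vcpu * VCPU_PER_HOUR + memory_gb * GB_PER_HOUR


def savings_percent(before, after):
    """Percentage saved when cost drops from `before` to `after`."""
    return (1 - after / before) * 100


large = task_cost_per_hour(1, 2)
small = task_cost_per_hour(0.5, 1)
print(f"1 vCPU / 2 GB:   ${large:.5f} per hour")
print(f"0.5 vCPU / 1 GB: ${small:.5f} per hour")
print(f"Right-sizing saves {savings_percent(large, small):.0f}%")

# Dev environment running 8 hours a day, 5 days a week instead of 24/7.
business_hours = 8 * 5
print(f"Scheduled shutdown saves {savings_percent(HOURS_PER_WEEK, business_hours):.1f}%")
```

Halving both vCPU and memory halves the compute bill exactly, and running 40 of 168 weekly hours saves a little over 76%, which is where the "over 75%" figure comes from.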

For my projects, the ECS cost has been minimal. The batch processor runs tasks for seconds at minimum specs. The Kabob Store's main cost was VPC infrastructure ($2-3/day), not ECS.

Things to Know

A few operational details worth keeping in mind:

- Task placement - Fargate handles placement automatically. For EC2, use the binpack placement strategy to consolidate workloads on fewer instances and reduce waste.

- Task recycling - Fargate tasks on platform version 1.4.0+ are recycled after 14 days of continuous running. Your service will gradually replace old tasks.

- ENI limits - In awsvpc mode on EC2, each task needs an ENI. Enable ENI trunking to increase density (requires CloudFormation custom resources).

- Image pull time - Large images slow task startup. Keep images lean. Set ECS_IMAGE_PULL_BEHAVIOR=prefer-cached on EC2 instances to use cached images when available.

- Spot instance draining - For EC2 Spot instances, set ECS_ENABLE_SPOT_INSTANCE_DRAINING=true on the ECS agent for graceful task termination.

- Service quotas - Default Fargate vCPU quota is 6 on new accounts (up to 4,000 in production). Request increases proactively.

- Force new deployment - If you update a secret or parameter store value, the running tasks won't pick it up automatically. Force a new deployment to refresh.

Wrapping Up

ECS is the container orchestration service I use most on AWS. The free control plane, deep AWS integration, and flexible compute options make it the right choice for most container workloads that don't require Kubernetes-specific tooling.

The recent feature launches have been particularly impressive - native blue/green without CodeDeploy, canary and linear deployments, Managed Instances for GPU workloads, and Express Mode for rapid prototyping. Combined with Fargate's serverless simplicity and Service Connect's built-in service mesh, ECS has matured into a comprehensive platform for running containers at any scale.

I've used it for batch data processing with Step Functions fan-out, pizza ordering with long-running container workflows, and multi-region API backends with Aurora DSQL. In every case, ECS handled the orchestration cleanly while I focused on the application logic.

If you're running containers on AWS and haven't looked at ECS recently, the current feature set is worth a fresh evaluation. Start with a Fargate service behind an ALB, enable Container Insights, and go from there.

Resources

- Amazon ECS Documentation

- Amazon ECS Best Practices Guide

- Serverless Data Processor - Step Functions + Fargate - Batch processing with ECS

- Serverless Pizza Ordering - Long-running Fargate container workflows

- Aurora DSQL Kabob Store - FastAPI on Fargate with multi-region database

- Step Functions + Fargate GitHub Repo - Full Terraform and Rust container code

- Serverless Pizza GitHub Repo - Full Terraform and container code

- AWS London Ontario User Group - Meetups, talks, and community for AWS builders in the London, Ontario area

- AWS London Ontario User Group YouTube - Recorded talks and presentations

Connect with me on X, Bluesky, LinkedIn, GitHub, Medium, Dev.to, or the AWS Community. Check out more of my projects at darryl-ruggles.cloud and join the Believe In Serverless community.
